What Is HappyHorse-1.0? What We Actually Know About the New AI Video Model

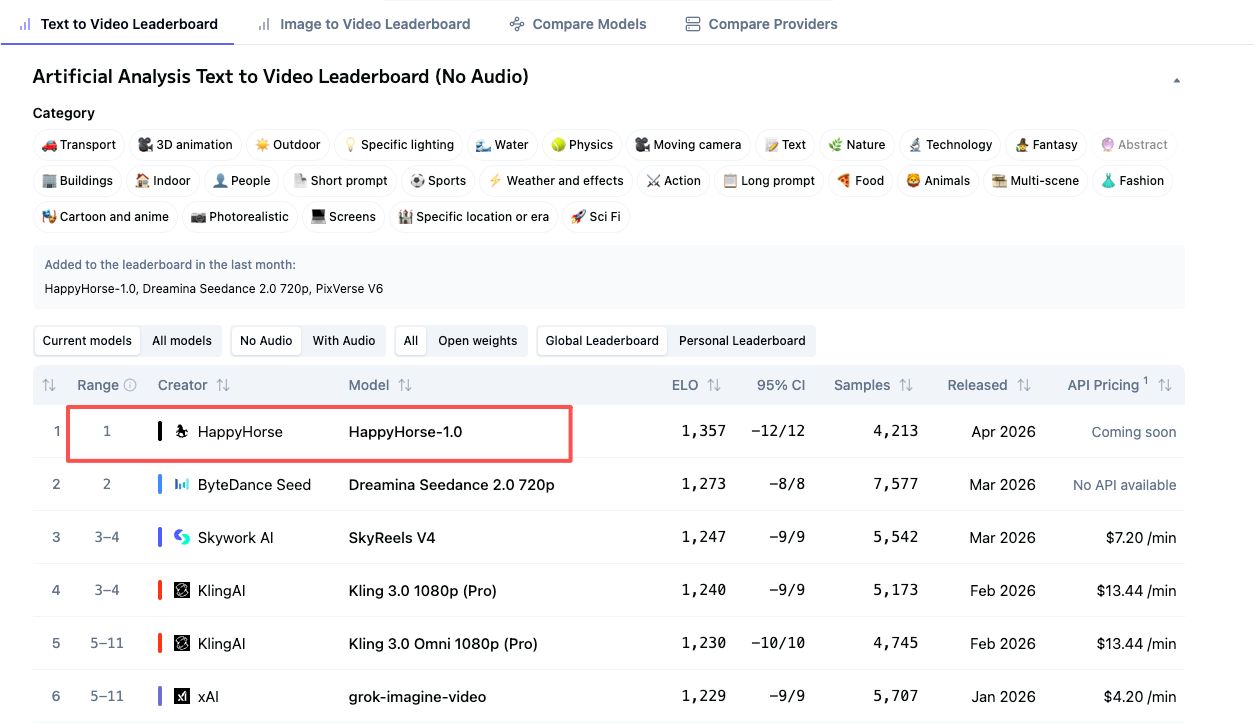

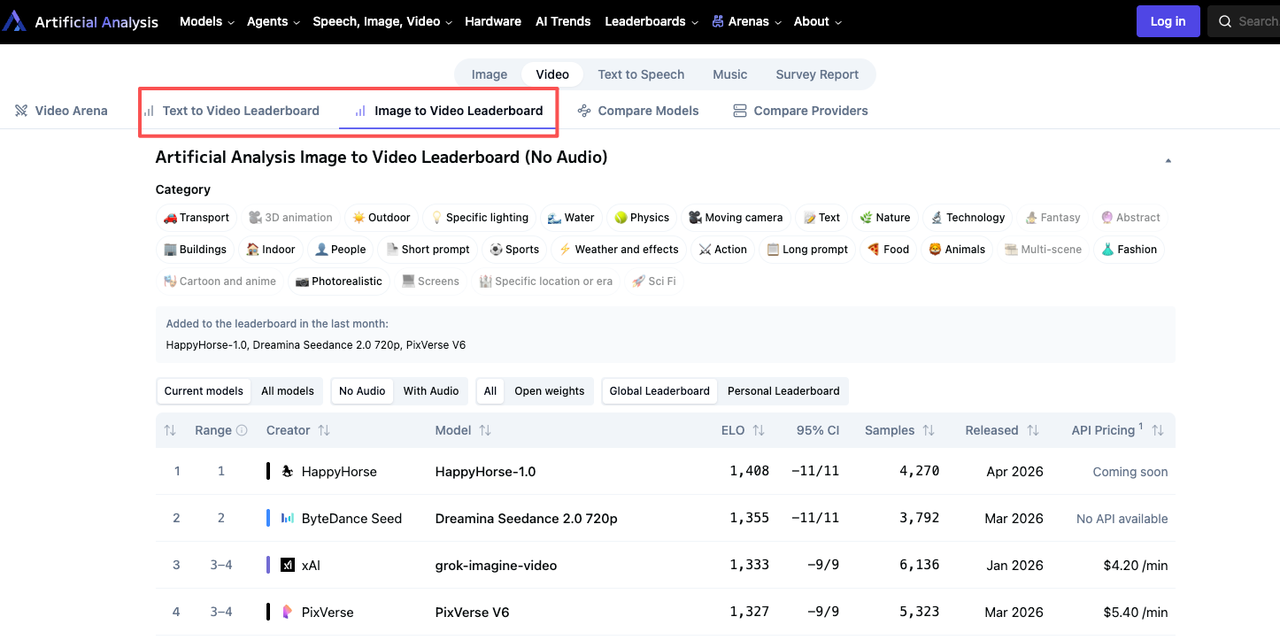

HappyHorse-1.0 is one of the most talked-about AI video models right now, but not for the usual reason. It did not arrive with a clean launch, a public team page, or a fully documented API. Instead, it started drawing attention because of how well it appeared to perform in blind-comparison rankings, while basic questions about access, authorship, and openness remained unanswered.

That combination is exactly why people are paying attention.

If you only skim the headlines, HappyHorse-1.0 sounds like the next obvious breakthrough in AI video. If you look more closely, the picture is more complicated. There are strong public signals that the model is worth watching, but there is still a meaningful gap between what appears to be true, what is being claimed, and what users can actually verify today.

This guide breaks that down in plain English.

Why people are suddenly talking about HappyHorse-1.0

HappyHorse-1.0 started showing up in conversations because it appeared near the top of video-model rankings that rely on blind user preference rather than self-reported benchmark claims. That matters because AI model launches often come wrapped in selective demos, carefully chosen comparisons, and marketing language that makes every release sound revolutionary.

A blind-comparison environment changes the signal. Users judge outputs side by side without knowing which model produced which result. When a previously unknown name performs unusually well in that setting, people notice quickly.
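To make that mechanism concrete, here is a minimal sketch of how blind pairwise votes can be turned into a leaderboard. It assumes an Elo-style rating update, which is a common choice for preference arenas but is not confirmed as the method behind any specific ranking discussed here. The model names, the `k` factor, and the starting rating are all hypothetical placeholders, not real results.

```python
from collections import defaultdict


def expected_score(rating_a, rating_b):
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def rank_from_votes(votes, k=32.0, start=1000.0):
    """Build a leaderboard from (winner, loser) pairs of blind votes.

    Each vote records only which anonymous output the user preferred.
    The k factor and starting rating are illustrative assumptions.
    """
    ratings = defaultdict(lambda: start)
    for winner, loser in votes:
        exp_win = expected_score(ratings[winner], ratings[loser])
        delta = k * (1.0 - exp_win)  # bigger gain for an unexpected win
        ratings[winner] += delta
        ratings[loser] -= delta
    # Highest rating first
    return sorted(ratings.items(), key=lambda item: item[1], reverse=True)


# Hypothetical votes between placeholder models, not real data
votes = [
    ("model_a", "model_b"),
    ("model_a", "model_c"),
    ("model_c", "model_b"),
]
leaderboard = rank_from_votes(votes)
for name, rating in leaderboard:
    print(f"{name}: {rating:.1f}")
```

The point of the sketch is that the rating only moves when users prefer one output over another without seeing the label, so a name nobody recognizes can still climb if its outputs keep winning.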

That is the main reason HappyHorse-1.0 moved from obscurity to serious discussion. It was not just another model page claiming better quality. It was a model that appeared to be winning attention before most people even knew who made it.

What HappyHorse-1.0 is supposed to do

Based on public-facing descriptions, HappyHorse-1.0 is positioned as a modern AI video model that can handle both text-to-video and image-to-video generation. It is also described as supporting audio-aware output and multilingual use cases, which is one reason it has attracted more curiosity than a standard video generator.

In practical terms, the pitch is straightforward. Instead of being framed as a narrow demo model, HappyHorse-1.0 is being presented as a more complete generation system for cinematic video creation, visual motion, and possibly synchronized audiovisual output.

That is the promise.

The more important question is how much of that promise can actually be confirmed.

What is actually confirmed right now

There are a few things that seem reasonably grounded in public evidence.

1. HappyHorse-1.0 has real visibility, not just isolated hype

This is not a case where a model appeared only on a single landing page and then got amplified by copycat blogs. It has shown up in multiple discussions around current AI video rankings, model comparisons, and access writeups. That alone does not prove quality, but it does show that it has crossed from niche curiosity into broader industry attention.

2. It is being discussed as a serious video model, not a toy demo

Most of the coverage around HappyHorse-1.0 treats it as a contender in the same conversation as higher-end AI video tools rather than as a novelty generator. The model is usually mentioned in contexts such as text-to-video quality, image-to-video capability, motion realism, and ranking performance.

That framing matters because it places HappyHorse-1.0 in the part of the market where expectations are higher. People are not asking whether it can make short clips at all. They are asking whether it might belong in the same tier as the strongest video models available today.

3. Public claims around the model are specific enough to be tested

One reason HappyHorse-1.0 has generated so much interest is that the claims around it are not vague. The public descriptions talk about architecture choices, multimodal generation, language support, and high-end output quality. Specificity does not equal truth, but it does mean the claims are concrete enough to be tested later.

That is very different from vague launch copy that only says a model is “next generation” or “state of the art.”

What is still unclear or unverified

This is where caution becomes necessary.

Team identity is still murky

For a model that has attracted this much attention, the lack of a clearly established team is unusual. There is a difference between a stealth launch and a normal early release. With many frontier AI products, even limited previews still come with a visible company, a research group, or a known lab. HappyHorse-1.0 has felt less straightforward than that.

That does not automatically mean something is wrong. It does mean readers should be careful not to fill the gap with certainty where only speculation exists.

The open-source story is not fully convincing yet

Some pages associated with HappyHorse-1.0 suggest that the model is open or heading toward open release. But in situations like this, the real question is simple: can people actually access the weights, the code, the license terms, and the documentation in a way that allows independent verification?

If the answer is still “not yet” or “not clearly,” then the model should not be treated as fully open in any practical sense, no matter how the marketing language sounds.
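One way to keep that test honest is to write it down as an explicit checklist. The sketch below is purely illustrative: the `ReleaseEvidence` class, its field names, and the example values are hypothetical, and nothing here reflects the verified status of HappyHorse-1.0 or any other model.

```python
from dataclasses import dataclass, fields


@dataclass
class ReleaseEvidence:
    """What a reader has personally been able to verify (all hypothetical fields)."""
    weights_downloadable: bool = False
    code_repository_public: bool = False
    license_terms_published: bool = False
    documentation_available: bool = False


def missing_artifacts(evidence):
    """Return the names of the checklist items that are still unverified."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def is_practically_open(evidence):
    """Treat a model as open only when every artifact has been verified."""
    return not missing_artifacts(evidence)


# Placeholder example: a project with a public repository and nothing else verified
example = ReleaseEvidence(code_repository_public=True)
print(is_practically_open(example))
print(missing_artifacts(example))
```

The design choice is deliberately strict: a single unverified item is enough to keep the answer at "not yet," which matches the standard described above.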

Technical claims are still mostly claims until they can be checked

Architecture descriptions, parameter counts, generation speed, audio-video synchronization, and multilingual capability can all be important. But until those claims are backed by reproducible access, public artifacts, or independent testing, they should be treated as provisional.

That is not skepticism for the sake of skepticism. It is just basic discipline. AI launches now move so fast that attractive technical claims can spread much faster than verifiable proof.

Access still matters as much as rankings

A model can be interesting and still not be usable.

That distinction is easy to lose when a new system starts climbing rankings or circulating through industry discussion. But for creators, product teams, and developers, access is not a minor detail. It is the difference between a model that is exciting to watch and a model that can actually be adopted.

If there is no stable API, no reliable documentation, and no clear release path, then the model is still more of a signal than a tool.

Why the hype matters — and why it is still not enough

HappyHorse-1.0 is worth paying attention to because output quality signals matter. If a previously unknown model starts outperforming expectations in blind-comparison settings, that tells you something real. It suggests that whatever is behind the name is not just empty branding.

At the same time, a quality signal is only one part of the decision.

For actual users, the more useful question is not “Is this model exciting?” It is “Can I use it, trust it, and plan around it?” Those are different questions, and right now they do not all have equally strong answers.

That is why HappyHorse-1.0 sits in an interesting middle zone. It feels too important to ignore, but still too incomplete to describe as a fully established option.

Is HappyHorse-1.0 worth watching?

Yes — absolutely.

If you follow the AI video space closely, HappyHorse-1.0 is the kind of model you should keep an eye on. It has enough public momentum, enough quality-related interest, and enough unusual positioning to matter.

But “worth watching” is not the same as “ready to rely on.”

If you are a creator who only wants to know what frontier video quality might look like next, HappyHorse-1.0 is clearly relevant. If you are a team trying to choose a dependable production model today, you should still care more about access, stability, documentation, and deployment reality than about mystery-driven momentum.

What to watch next

If HappyHorse-1.0 turns into a real long-term option, the shift will probably become obvious through a few concrete signals.

Public release details

The most important development would be a verifiable release path: actual weights, a real repository, proper licensing terms, or a documented API. That is the line between fascination and practical adoption.

Independent testing

The second thing to watch is whether third parties start testing it in a reproducible way. Once outside users can compare quality, speed, consistency, prompt response, and failure modes under repeatable conditions, the conversation becomes much more useful.

Clear ownership and positioning

The third thing is simple transparency. Once the people or organization behind the model are clear, it becomes easier to evaluate incentives, release credibility, support expectations, and long-term reliability.

Until then, the model remains compelling, but not fully legible.

FAQ

What is HappyHorse-1.0?

HappyHorse-1.0 is an AI video model, described publicly as handling both text-to-video and image-to-video generation, that drew attention after appearing near the top of blind-comparison video rankings while its team, access path, and release terms were still unclear.

Is HappyHorse-1.0 open source?

Not in any fully confirmed, practically usable sense yet. There are public claims around openness, but those claims should be treated carefully until weights, code, licenses, and documentation are clearly accessible.

Can you use HappyHorse-1.0 right now?

That depends on what kind of access you mean. The model is visible in rankings and public discussion, but stable, documented, production-ready access appears much less certain than the hype around it suggests.

Who made HappyHorse-1.0?

That is still one of the biggest open questions. Part of the model’s current intrigue comes from the fact that public identity and attribution have not been as clear as many readers would expect from a major AI release.

Is HappyHorse-1.0 better than other AI video models?

It may be more competitive than many people expected, which is why it has become a real topic of discussion. But “better” depends on what you value most: blind preference, audio support, production access, workflow stability, or openness.

Final verdict

HappyHorse-1.0 is one of the more interesting AI video stories of the moment because it combines two things that do not usually arrive together: a strong quality signal and a weak transparency signal.

That is exactly why the model feels so important and so uncertain at the same time.

If you care about the cutting edge of AI video generation, it is worth watching closely. If you need a dependable tool you can actually build around today, you should stay more conservative until access, ownership, and verification become clearer.

Right now, the most honest summary is this: HappyHorse-1.0 may be real breakthrough material, but it is still a story in progress.